MCP: Breaking Data Silos with the Type-C Port of AI

In One Line

Model Context Protocol (MCP) is an open standard for connecting large language models to external data sources, tools, and services. I think of it as the USB Type-C port of the AI world: a universal adapter that lets models talk to many different systems through one common interface. With MCP, an LLM can query data or trigger actions across multiple systems without every integration becoming a one-off engineering project.

Open Standards: The Common Language of Technology

Open standards are the common language of modern technology. They are shared rules that allow different devices, services, and systems to cooperate.

Take a simple example. If I send a photo from an Android phone to a friend using an iPhone, the image still opens because both devices understand formats like JPEG and PNG. Or think about charging: an Android phone and a laptop from different brands can both use the same USB Type-C cable because they follow the same interface standard.

That is the intuition behind MCP. It aims to do for AI integrations what Type-C did for hardware ports: replace a mess of incompatible connectors with one widely accepted interface.

Why AI Has a Data Silo Problem

AI assistants are improving fast, but even the best models hit the same bottleneck: the data they need is locked away in disconnected systems.

A data silo is what happens when information lives in different products and every product has its own access model, auth flow, and interface. The moment you want one assistant to read GitHub, query a database, search Slack, and fetch Google Docs, the integration burden becomes obvious.

Without MCP, this turns into a classic engineering nightmare:

- every data source needs its own integration

- every auth model must be wired separately

- every change duplicates work across multiple parts of the stack

- every additional connector increases security and maintenance complexity

That is why MCP matters. It is not just another protocol. It is an attempt to eliminate integration fragmentation at the infrastructure level.
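To put a rough number on the burden listed above, here is a small back-of-the-envelope sketch. It assumes the simplest possible model: without a shared protocol, every application needs its own connector for every system, and with one, each side implements the protocol once. The function names and the counts are hypothetical and only illustrate the arithmetic.

```python
def point_to_point(apps: int, sources: int) -> int:
    """Connectors needed when every app integrates every source directly."""
    return apps * sources


def with_shared_protocol(apps: int, sources: int) -> int:
    """Adapters needed when each app and each source implements one protocol once."""
    return apps + sources


# Hypothetical setup: 3 assistants, 4 systems (GitHub, a database, Slack, Google Docs).
apps, sources = 3, 4
print(point_to_point(apps, sources))        # 12
print(with_shared_protocol(apps, sources))  # 7
```

The gap widens as either number grows, which is the practical meaning of "integration fragmentation".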

What MCP Actually Is

MCP is an open standard that defines a common way for AI systems to access resources and capabilities from external systems.

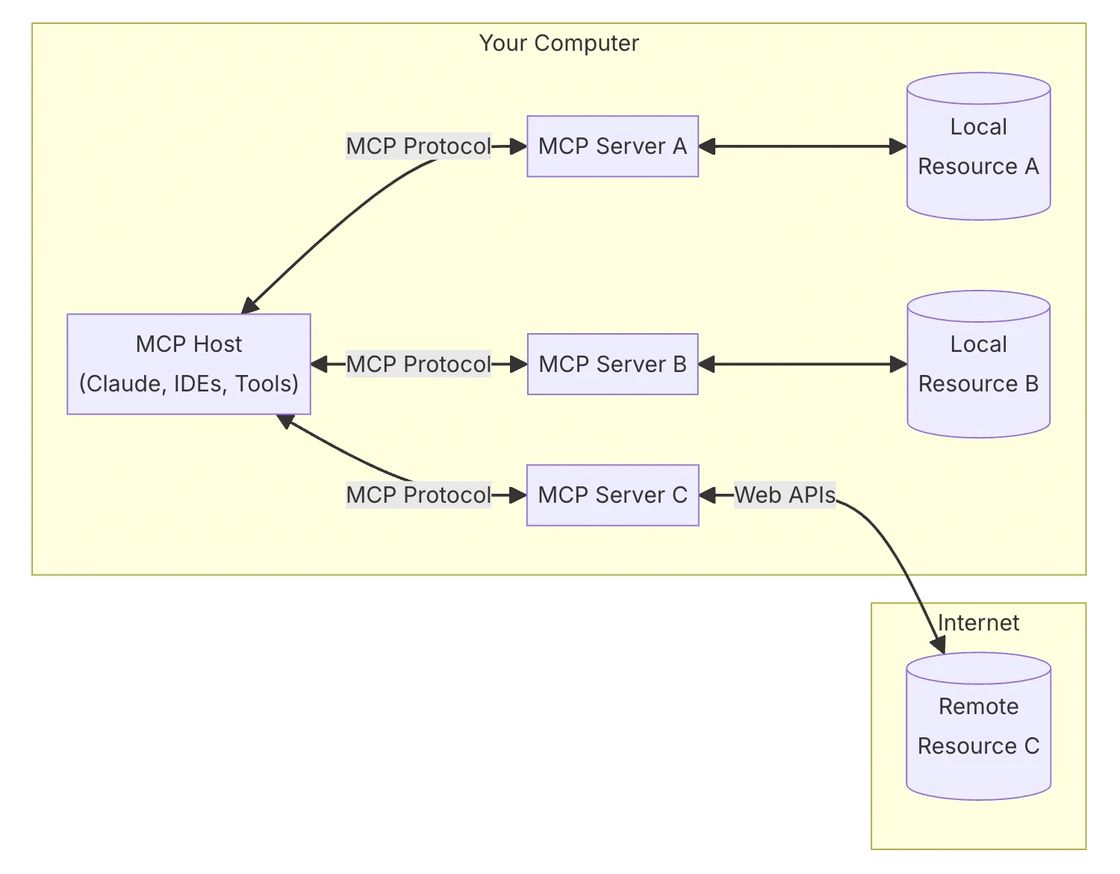

At a high level, MCP uses a host-client-server structure:

- Host: the application that wants to use external capabilities, such as Claude Desktop, an IDE, or another AI tool

- Client: the MCP-aware component inside that application that maintains a connection to one server and sends requests on the host's behalf

- Server: a lightweight program that exposes specific capabilities and connects to local or remote resources

With this architecture, the AI application no longer needs to understand the implementation details of every underlying data source. It only needs to speak the standard protocol.
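The three roles can be sketched in a few lines of plain Python. This is a toy, in-process model and not the official MCP SDK: `ToyServer`, `ToyClient`, and `ToyHost` are names I made up, and there is no real transport. The parts borrowed from the protocol are the JSON-RPC 2.0 envelope and the `tools/list` and `tools/call` method names; the payloads are trimmed down (a real tool listing also carries descriptions and input schemas).

```python
import json
from typing import Any, Callable, Dict, Optional


class ToyServer:
    """Stands in for an MCP server: exposes a few named capabilities."""

    def __init__(self, name: str, tools: Dict[str, Callable[..., Any]]) -> None:
        self.name = name
        self._tools = tools

    def handle(self, raw: str) -> str:
        request = json.loads(raw)
        method, params = request["method"], request.get("params", {})
        if method == "tools/list":
            result = {"tools": [{"name": tool_name} for tool_name in self._tools]}
        elif method == "tools/call":
            output = self._tools[params["name"]](**params.get("arguments", {}))
            result = {"content": [{"type": "text", "text": str(output)}]}
        else:
            error = {"code": -32601, "message": "Method not found"}
            return json.dumps({"jsonrpc": "2.0", "id": request["id"], "error": error})
        return json.dumps({"jsonrpc": "2.0", "id": request["id"], "result": result})


class ToyClient:
    """Lives inside the host and talks to exactly one server."""

    def __init__(self, server: ToyServer) -> None:
        self._server = server
        self._next_id = 0

    def request(self, method: str, params: Optional[dict] = None) -> dict:
        self._next_id += 1
        message = {"jsonrpc": "2.0", "id": self._next_id,
                   "method": method, "params": params or {}}
        reply = json.loads(self._server.handle(json.dumps(message)))
        if "error" in reply:
            raise RuntimeError(reply["error"]["message"])
        return reply["result"]


class ToyHost:
    """The user-facing application: one client per connected server."""

    def __init__(self) -> None:
        self.clients: Dict[str, ToyClient] = {}

    def connect(self, server: ToyServer) -> None:
        self.clients[server.name] = ToyClient(server)

    def call(self, server_name: str, tool: str, **arguments: Any) -> str:
        params = {"name": tool, "arguments": arguments}
        result = self.clients[server_name].request("tools/call", params)
        return result["content"][0]["text"]


host = ToyHost()
host.connect(ToyServer("math", {"add": lambda a, b: a + b}))
host.connect(ToyServer("text", {"shout": lambda s: s.upper()}))
print(host.call("math", "add", a=2, b=3))     # 5
print(host.call("text", "shout", s="hello"))  # HELLO
```

Notice that `ToyHost` never touches the lambdas directly. It only knows server names, tool names, and the message format, which is the point of the architecture.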

How MCP Breaks Information Isolation

MCP helps in four concrete ways:

- A unified protocol: Data sources no longer each require a custom integration path. If a tool or system speaks MCP, it becomes accessible through the same interaction model.

- Simpler access to external systems: Instead of rebuilding connectors again and again, developers can plug new systems into a standard interface and move faster.

- Better security boundaries: MCP is designed with authorization and controlled access in mind, so the application interacts through explicit, governed interfaces instead of ad hoc backdoors.

- Flexible extensibility: The same pattern works across local databases, internal tools, and cloud services. Small teams and large enterprises can both use the same abstraction.
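A small sketch makes the "standard interface" and "explicit boundary" points more tangible. It assumes a hypothetical in-process registry: `REGISTRY`, `tool`, and `call` are illustrative names of my own, not part of MCP or any SDK. Adding a backend means registering one function, and anything that was never registered is simply unreachable.

```python
from typing import Any, Callable, Dict

# Hypothetical registry: the only capabilities a caller can ever reach.
REGISTRY: Dict[str, Dict[str, Any]] = {}


def tool(name: str, description: str):
    """Register a function as an explicitly exposed capability."""
    def decorator(fn: Callable[..., Any]) -> Callable[..., Any]:
        REGISTRY[name] = {"description": description, "fn": fn}
        return fn
    return decorator


@tool("search_notes", "Search in-memory notes for a keyword")
def search_notes(keyword: str) -> list:
    notes = ["ship MCP demo", "renew TLS certificate", "MCP server review"]
    return [note for note in notes if keyword.lower() in note.lower()]


@tool("count_stock", "Look up how many units of an item are in stock")
def count_stock(item: str) -> int:
    stock = {"widget": 12, "gadget": 0}
    return stock.get(item, 0)


def call(name: str, **arguments: Any) -> Any:
    """Single entry point: only registered names are reachable."""
    if name not in REGISTRY:
        raise PermissionError(f"tool not exposed: {name}")
    return REGISTRY[name]["fn"](**arguments)


print(sorted(REGISTRY))                     # ['count_stock', 'search_notes']
print(call("search_notes", keyword="mcp"))  # ['ship MCP demo', 'MCP server review']
print(call("count_stock", item="widget"))   # 12
try:
    call("delete_everything")
except PermissionError as exc:
    print(exc)                              # tool not exposed: delete_everything
```

Two very different backends sit behind the same `call` entry point, and extending the system is one decorator away. Real authorization involves much more than a name check, so treat the `PermissionError` branch as a stand-in for that boundary, not a security mechanism.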

Here is a simple mental model of the flow:

-

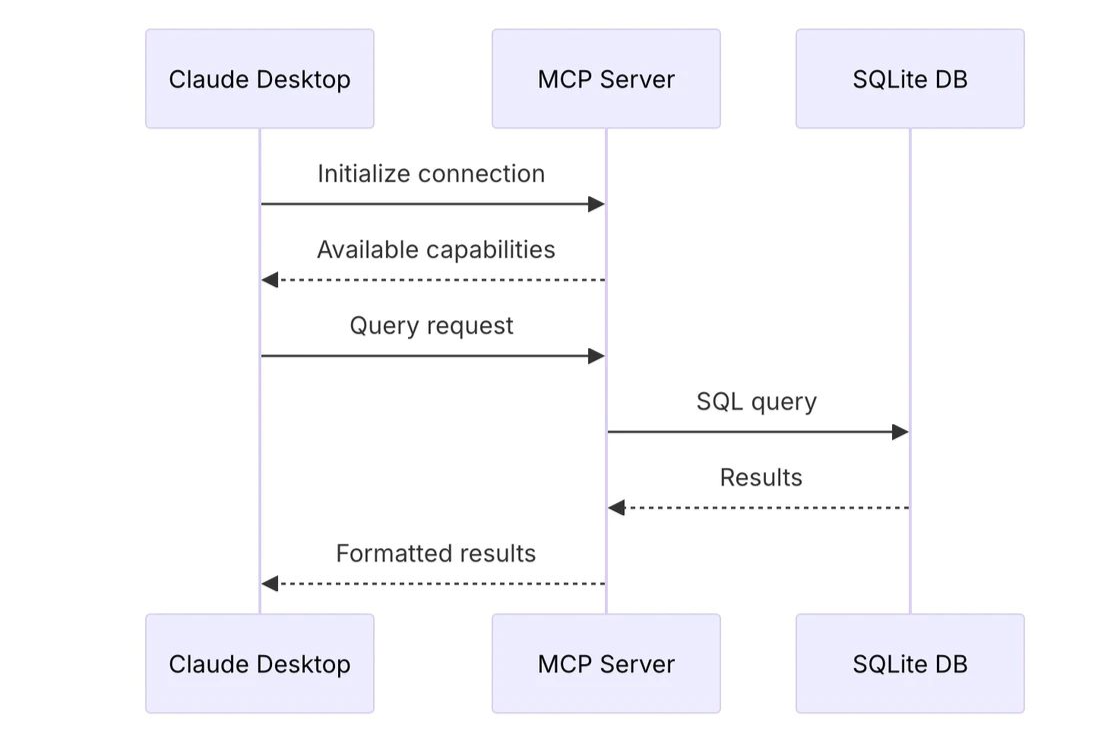

Initialize the connection

Claude Desktop or another host application connects to an MCP server. -

Negotiate capabilities

The server responds with the operations and resources it supports. -

Send a request

The host asks for a query or an action. -

Access the backend system

The MCP server translates that request into the necessary backend operation, for example a SQL query against SQLite. -

Return the result

The backend sends the result to the MCP server. -

Format the output

The MCP server turns the raw result into something the host can consume safely and consistently. -

Deliver it back to the user-facing application

The host receives the structured result and can show it to the user or pass it back into the model loop.
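The seven steps above can be walked through with nothing but the Python standard library. This is a sketch under stated assumptions, not how a production server is written: the function call stands in for a real transport, the `users` table and the `count_users_by_plan` tool are invented for the example, and the message fields are a trimmed-down version of the JSON-RPC shapes MCP uses. As far as I understand the spec, the actual tool list is fetched with a separate `tools/list` request after initialization, which this sketch leaves out.

```python
import json
import sqlite3

# Backend system: an in-memory SQLite database with made-up rows.
db = sqlite3.connect(":memory:")
db.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT, plan TEXT)")
db.executemany(
    "INSERT INTO users (name, plan) VALUES (?, ?)",
    [("Ada", "pro"), ("Linus", "free"), ("Grace", "pro")],
)


def server_handle(raw: str) -> str:
    """Toy server: maps protocol messages onto backend operations."""
    req = json.loads(raw)
    method, params = req["method"], req.get("params", {})
    if method == "initialize":
        # Step 2: tell the host which feature areas this server supports.
        result = {
            "protocolVersion": "2024-11-05",
            "capabilities": {"tools": {}},
            "serverInfo": {"name": "toy-sqlite", "version": "0.1"},
        }
    elif method == "tools/call" and params.get("name") == "count_users_by_plan":
        plan = params["arguments"]["plan"]
        # Steps 4 and 5: translate to a parameterized SQL query and run it.
        row = db.execute("SELECT COUNT(*) FROM users WHERE plan = ?", (plan,)).fetchone()
        # Step 6: wrap the raw number in a structured, predictable payload.
        result = {"content": [{"type": "text", "text": f"{row[0]} users on plan {plan}"}]}
    else:
        error = {"code": -32601, "message": "Method not found"}
        return json.dumps({"jsonrpc": "2.0", "id": req["id"], "error": error})
    return json.dumps({"jsonrpc": "2.0", "id": req["id"], "result": result})


def send(msg_id: int, method: str, params: dict) -> dict:
    """Host side: serialize a request, 'transmit' it, parse the reply."""
    raw = json.dumps({"jsonrpc": "2.0", "id": msg_id, "method": method, "params": params})
    return json.loads(server_handle(raw))


# Steps 1 and 2: open the session and read back the server's capabilities.
init = send(1, "initialize", {
    "protocolVersion": "2024-11-05",
    "capabilities": {},
    "clientInfo": {"name": "toy-host", "version": "0.1"},
})
print(init["result"]["capabilities"])         # {'tools': {}}

# Step 3: ask for an action.
reply = send(2, "tools/call", {"name": "count_users_by_plan", "arguments": {"plan": "pro"}})

# Step 7: the host receives structured output it can show or feed to the model.
print(reply["result"]["content"][0]["text"])  # 2 users on plan pro
```

The host never sees SQL, and the server never interpolates the argument into the query string. That separation is what the "format the output" and "access the backend" steps are about.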

In that sense, MCP acts as the middle layer between an AI application and the underlying systems it wants to use. It standardizes capability discovery, query execution, and secure data exchange. The result is a stack that is more composable, more maintainable, and much easier to scale.