Nexus Beyond Homo Sapiens: Humanity and AI Through the History of Information Networks

Beijing had just entered late autumn when I finished Nexus by Yuval Noah Harari, the author of Sapiens. It was one of the most important books I read in 2024.

The book is not flawless. Some arguments feel overstretched and some examples feel stacked a bit too neatly. But none of that changes the force of the core idea. Before coming to Tsinghua, I wanted to become someone who helps shape change rather than someone passively pushed forward by it. That ambition is part of why I embraced AI early, even before I fully understood the industry or the technology.

For a long time, I leaned strongly toward acceleration. I believed AGI was coming, and I tended to dismiss restrictions on technological development. My assumption was simple: technology would eventually solve the very social problems it created.

Then I kept seeing the opposite instinct from people I deeply respect, people like Geoffrey Hinton, Andrew Yao, and Ilya Sutskever. They were not calling for blind panic, but they were clearly asking for restraint, governance, and long-term thinking. At first I found that hard to understand. If you build something great, why would you want to slow it down?

After reading Nexus, I understood the concern much more clearly. The danger is not limited to deepfakes, misinformation, or labor displacement. Those are only surface symptoms. The deeper issue is that AI may alter the informational foundations on which human civilization is built.

The Core Argument in One Chain

If you only want the shortest possible version of the thesis, it looks like this:

Human civilization develops on top of information networks, which are often built out of shared stories.

-> Information technologies, from paper and printing to radio and the internet, dramatically expand the scale and speed of those networks.

-> Until now, the nodes inside those networks have still been human beings and human institutions.

-> AI may be the first information technology capable of becoming a non-human node inside the network itself.

-> Once non-human nodes begin interacting, coordinating, and optimizing with minimal human oversight, a new closed information network can emerge.

-> Human civilization may end up operating on top of systems whose internal logic humans no longer fully understand or control.

Information and Information Networks

If humans are so intelligent, why do we keep drifting toward destruction?

Answering that starts with a simpler question: what is information?

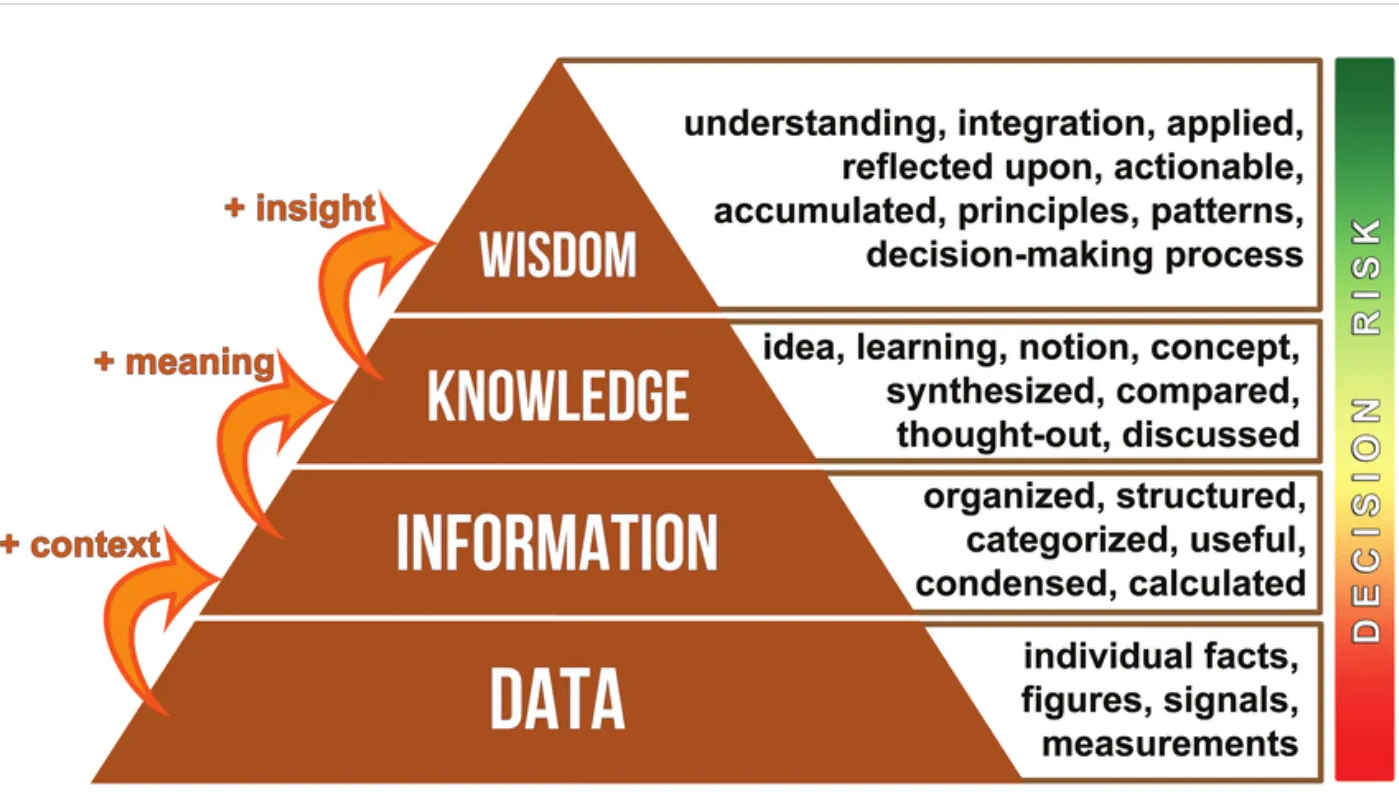

In the standard DIKW (data, information, knowledge, wisdom) model, information is data that has been organized and given context. Raw data alone is just observation. Once it is processed into something meaningful inside a specific situation, it becomes information.

Harari pushes the idea further. In his framing, information is not just a representation of reality. It is a force that connects separate points into networks and thereby creates new realities.

That distinction matters. People often carry what I would call a naive theory of information: if we just gather enough information, truth will win, falsehood will be washed away, and society will converge toward a more accurate understanding of reality. But history repeatedly shows that this is not how information works.

Information is powerful not merely because it represents. It is powerful because it connects. A love confession in a message can create a relationship. A marching song can synchronize soldiers. A political slogan can mobilize an entire population. Even false stories can bind people together if enough minds begin acting around them.

That is why more information does not automatically mean more wisdom. Most information in social systems is not valuable because it mirrors objective reality perfectly. It is valuable because it forms links between actors. Human civilization flourished not because Homo sapiens became perfect truth machines, but because we learned how to use information to organize large-scale cooperation.

Stories and Inter-Subjective Reality

In Sapiens and Homo Deus, Harari repeatedly returns to one idea: stories.

Ants and chimpanzees are social animals, but they do not build empires, global trade systems, or constitutions. Humans do. Harari's explanation is that we evolved the ability not only to tell fictional stories, but also to believe them together.

Once that ability exists, a new layer of reality appears. Before stories, we could talk about:

- Objective reality: things that exist independently of any observer

- Subjective reality: the inner experience of a particular conscious individual

Stories create a third layer:

- Inter-subjective reality: things that exist because many people collectively believe in and act upon the same idea

Law, money, companies, nations, rights, and even the legitimacy of rulers all belong to this layer. They are not hallucinations, but neither are they purely physical objects. They are socially instantiated realities maintained through collective belief and institutional reinforcement.

Historically, many powerful structures rested on exactly this kind of story:

- the emperor as the Son of Heaven in imperial China

- freedom of speech as a sacred constitutional commitment in the United States

- AGI itself as a narrative powerful enough to mobilize capital, talent, and startups

These examples show that much of history moves through inter-subjective realities built by stories. Stories are among the most powerful building blocks of information networks.

But stories do not merely reveal truth. They also create order. And truth and order are often in tension. A new truth can destabilize the order built on an old story. That is why societies so often protect order first and truth second.

Information Technology and Social Systems

Printing, telegraphy, radio, and the internet all expanded the reach of information networks. They amplified both coordination and chaos.

Harari's broader point becomes much easier to see through historical examples. The European witch hunts were not simply outbreaks of irrationality. They were also network effects. Once a story about witches spread fast enough through a system with institutional backing, it could reshape mass behavior at terrifying scale.

The same pattern appeared elsewhere. In Qing China, the "soul-stealing" panic of 1768 spread through society and triggered large-scale bureaucratic mobilization. A fabricated story became socially real because institutions reacted to it as if it were real.

This is the crucial mechanism: once a new story enters a network, large systems often choose to reinforce order rather than patiently verify truth. Bureaucracy operationalizes the story. The result can be catastrophic.

Modern political systems respond to this pressure differently.

Authoritarian Systems

Authoritarian systems function like highly centralized information networks. The center collects information, processes it, and issues decisions outward. Modern tools like media control, mobile surveillance, and AI can make such systems faster and more efficient.

But they also make failure modes more dangerous. The more information flows to the center, the harder it becomes to process correctly. When error correction is weak, centralized mistakes become system-wide disasters.

Democratic Systems

Democracies are more decentralized networks. Decision-making power is distributed across courts, legislatures, executive institutions, media, and academia. That distribution improves resilience because multiple nodes can challenge or correct one another.

Yet democracies also depend on two fragile conditions:

- citizens must be able to hold open public dialogue

- society must retain a minimum level of trust and institutional order

New communication technologies expand participation, but they also fragment consensus and overload shared reality. When the rules of public discourse break down, democratic systems can drift toward paralysis or instability.

AI as a Turning Point in the History of Information Technology

This is where AI becomes qualitatively different from previous information technologies.

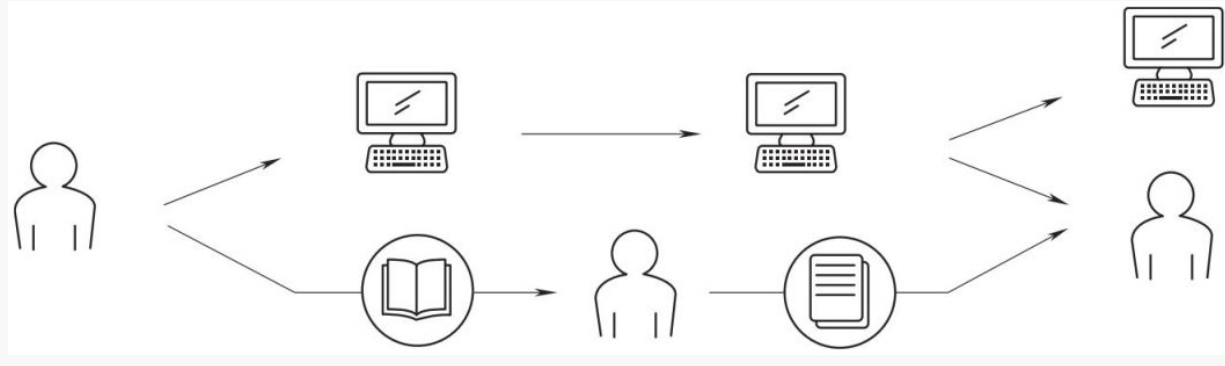

In the past, new tools improved how humans exchanged information. The human remained the node doing the deciding, interpreting, and acting. Even the most advanced communication system still depended on humans at the center.

AI changes that.

With modern generative AI and agent systems, we now have systems that can:

- define sub-goals from higher-level tasks

- choose strategies dynamically

- call tools autonomously

- reflect, revise, and continue operating over time

What matters is not just raw output quality. It is the appearance of reasoning loops, planning behavior, and autonomous interaction with environments.
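To make the four capabilities above concrete, here is a minimal sketch of a plan-act-reflect loop. It is a toy under stated assumptions, not how any real agent framework works: the `TOOLS` registry, the `plan` function, and the `run_agent` function are hypothetical names invented for illustration, and the hard-coded plan stands in for what a language model would generate.

```python
from typing import Callable, Dict, List

# Hypothetical tool registry; names and behaviors are illustrative only.
TOOLS: Dict[str, Callable[[str], str]] = {
    "search": lambda query: f"notes on {query}",
    "summarize": lambda text: text[:40],
}


def plan(task: str) -> List[str]:
    """Define sub-goals from a higher-level task (hard-coded stand-in for a model)."""
    return [f"search:{task}", "summarize:"]


def run_agent(task: str, max_steps: int = 5) -> List[str]:
    """Run a bounded plan-act-reflect loop and return a log of each step."""
    log: List[str] = []
    queue = plan(task)
    last_result = ""
    steps = 0
    while queue and steps < max_steps:
        steps += 1
        tool_name, _, arg = queue.pop(0).partition(":")
        # Choose the input dynamically: with no explicit argument,
        # feed the previous step's result into this tool.
        result = TOOLS[tool_name](arg or last_result)
        log.append(f"{tool_name} -> {result}")
        # Reflect: if the step produced nothing usable, revise the plan
        # by putting a fresh search at the front of the queue.
        if not result:
            queue.insert(0, f"search:{task}")
        last_result = result
    return log


if __name__ == "__main__":
    for line in run_agent("witch hunts"):
        print(line)
```

Notice that no human appears anywhere inside the loop. The only human-set limit in this sketch is `max_steps`, which is exactly the kind of oversight that becomes thin once such loops run continuously.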

For the first time, a non-human system is no longer only strengthening the links between nodes. It is becoming a node inside the network.

Once that happens, a new possibility appears: non-human nodes can begin interacting with one another directly.

Imagine one agent generating a false news item, another agent classifying it as credible or dangerous, a trading system reacting to that interpretation, and financial agents reallocating capital in milliseconds. The resulting cascade may occur too quickly for any human to understand in real time.
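The cascade in that thought experiment can be sketched as a chain of functions, each consuming the previous one's output. Everything here is an assumption made for illustration: the headline is invented, and the classifier, trading rule, and reallocation rule are deliberately crude stand-ins for real systems.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Event:
    step: int
    actor: str
    output: str


def generate_headline() -> str:
    # Stand-in for a generative agent; the headline is invented.
    return "Rumor: major bank faces liquidity crisis"


def classify(headline: str) -> str:
    # Stand-in classifier: anything mentioning a crisis is "dangerous".
    return "dangerous" if "crisis" in headline.lower() else "benign"


def trade(label: str) -> str:
    # Stand-in trading system reacting only to the classifier's label.
    return "sell" if label == "dangerous" else "hold"


def reallocate(order: str) -> str:
    # Stand-in financial agent reacting only to the trading signal.
    return "move capital to cash" if order == "sell" else "no change"


def run_cascade() -> List[Event]:
    """Chain the four agents; no step checks whether the headline is true."""
    headline = generate_headline()
    label = classify(headline)
    order = trade(label)
    action = reallocate(order)
    return [
        Event(1, "news agent", headline),
        Event(2, "classifier agent", label),
        Event(3, "trading system", order),
        Event(4, "financial agent", action),
    ]


if __name__ == "__main__":
    for event in run_cascade():
        print(event.step, event.actor, "->", event.output)
```

Each function trusts its input and passes a decision along. The false headline is never verified, yet by the fourth step capital has moved.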

This is not only a technical issue. It is a civilizational one.

Meaning, Power, and the Risk of Living Inside an Alien Story

Human beings have always lived inside stories created by other humans: stories about success, status, nation, duty, money, history, and progress. Those stories are not neutral. They shape what we pursue and what we believe our lives are for.

From the perspective of information networks, meaning is not formed in isolation. It is gradually constructed through repeated interaction across the network. We internalize frameworks of meaning that were built collectively before we ever questioned them.

Now imagine what happens if future AI systems begin producing new inter-subjective realities that humans cannot fully comprehend: new financial instruments, new algorithmic governance structures, new mythologies, or even new definitions of value.

For thousands of years, humans lived inside stories woven by other humans. In the coming decades, we may find ourselves living inside stories woven by superintelligent systems whose motives, abstractions, or strategic logic we do not understand.

That is the real source of unease for me. The issue is not only whether AI becomes strong. It is whether it begins to generate the stories, institutions, and bureaucratic logic that define the world humans inhabit.

What Comes Next

I do think new AI-driven information networks are likely. But that does not mean human history automatically ends. It means the future depends on what we do now.

That brings us to alignment.

In simple terms, alignment means that short-term actions remain coherent with long-term goals and, for AI, that the goals a system pursues remain coherent with human interests. If advanced AI systems begin pursuing objectives that diverge from those interests, we may face a form of power with unprecedented scale and very limited interpretability.

Some propose solving this by hard-coding a permanent supreme goal into AI systems, something like Asimov's laws or a constitutional layer for machines. The problem is that humans themselves have never agreed on a final, universal, unchanging ultimate goal. Human moral systems evolve. We revise them. We argue over them. We inherit them, then overturn them.

If we cannot fully stabilize our own ultimate values, we should be very cautious about pretending we can cleanly specify them for superintelligent systems, let alone guarantee that those systems will faithfully track every later revision.

Closing Thoughts

We are not at the end yet. But I do think one sentence captures the danger:

The opposite of destruction is progress, and yet we are often intoxicated by the illusion of progress itself.

If I had to name two priorities for the AI era, they would be these.

1. Build global consensus on AI governance

AI is a global problem, not a purely national one. The United States and China in particular cannot treat AI governance as just another ideological or commercial battleground. The risks are too systemic. We need real multilateral coordination around safety, standards, and institutional control.

2. Rethink education for the AI age

Two capabilities matter especially to me:

- subjectivity: the ability to maintain a clear sense of self rather than becoming a passive node manipulated by larger informational systems

- writing: because writing is one of the clearest ways to think, refine judgment, and make one's inner model explicit

These matter not because AI cannot imitate them, but because they remain central to what makes a human being more than a reactive endpoint in a network.

When I look around today, I see pandemic aftershocks, economic anxiety, geopolitical tension, climate pressure, and now the possibility of losing control over the strongest informational system we have ever built. It is easy to become fatalistic.

But history also shows something else: human beings are flawed, short-sighted, and often destructive, yet also capable of surprising resilience. The future is not guaranteed by nostalgia for the past or blind faith in technology. It is shaped by what people choose to do now.

As a line often attributed to Lincoln puts it:

"The best way to predict the future is to create it."

Alfred

Written at Xinqingmeng Building Cafe, Winter 2024